Programmatic SEO with AI: Build 100+ Pages Without Thin Content

How to build programmatic SEO pages that rank - the framework I use for SaaS and content businesses: keyword research, data sourcing, AI generation, and QA.

Programmatic SEO with AI has a bad reputation, and most of it is earned.

The default implementation is: scrape some data, slot it into a template, generate 10,000 pages, get de-indexed in three months. That is not programmatic SEO. That is a thin content factory, and Google has been penalizing this pattern since the Helpful Content updates.

The version that works is slower to set up, produces fewer pages, and requires real strategy. It also ranks, converts, and keeps ranking.

This is what that version looks like.

TL;DR: the framework that works

- Start with a keyword pattern that has clear intent and enough volume.

- Build a structured data source that makes every page genuinely different.

- Use one strong template with variable sections, not one rigid block of generic text.

- Use AI to expand data into helpful copy, then run similarity and fact QA before publishing.

- Track indexation and query performance in Google Search Console at the sitemap level.

If you want this implemented end-to-end, see Programmatic SEO service.

What programmatic SEO actually is

Programmatic SEO is a system for generating a large number of landing pages from a structured data source and a content template, with each page targeting one instance of a keyword pattern that shares the same intent.

The classic examples:

- Zapier’s integration pages: "Connect [App A] with [App B]" - ~50,000 pages, each targeting a specific integration pair

- Nomad List’s city pages: "Living in [City]" - hundreds of pages, each with real cost-of-living data

- G2’s comparison pages: "[Tool A] vs [Tool B]" - targeting high-intent comparison searches

What these have in common: each page is genuinely different because the underlying data is different. The template structures the page; the data makes it unique.

What fails: pages that are identical except for a swapped keyword, with no real data or useful content behind them. Google can usually tell the difference, and those pages are far more likely to be ignored or de-indexed.

When it’s worth doing

Programmatic SEO makes sense when:

- There’s a clear keyword pattern - a template like [Tool] + [Use Case], [City] + [Service], or [Product] + [Integration] where each combination has genuine search volume

- You have or can build a structured data source - a database, API, or dataset that can populate each page with unique, useful content

- The pattern has enough volume to justify the system - building the pipeline takes real time; you need at least 50–100 viable page targets to make it worthwhile
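As a rough illustration of checking whether a pattern has enough viable targets, the sketch below expands a [Tool] + [Use Case] pattern into candidate keywords and keeps those above a volume cutoff. The tool names, volumes, and the `MIN_VOLUME` cutoff are all placeholders; in practice the volumes would come from a keyword tool export.

```python
from itertools import product

# Hypothetical inputs: names and monthly volumes are placeholders, not real keyword data.
tools = ["Acme CRM", "Beta Mail"]
use_cases = ["lead scoring", "invoice reminders", "onboarding"]
monthly_volume = {
    "Acme CRM lead scoring": 140,
    "Acme CRM onboarding": 30,
    "Beta Mail invoice reminders": 90,
}

MIN_VOLUME = 20  # assumed cutoff; tune it to your niche


def viable_targets(left, right, volumes, min_volume):
    """Expand [left] + [right] into keywords and keep those with volume >= min_volume."""
    targets = []
    for a, b in product(left, right):
        keyword = f"{a} {b}"
        volume = volumes.get(keyword, 0)  # combinations with no data count as zero
        if volume >= min_volume:
            targets.append((keyword, volume))
    # Highest-volume targets first
    return sorted(targets, key=lambda t: t[1], reverse=True)


targets = viable_targets(tools, use_cases, monthly_volume, MIN_VOLUME)
print(len(targets), "viable targets out of", len(tools) * len(use_cases))
```

If the viable count comes out well under the 50–100 range, that is a signal the pattern may not justify the pipeline.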

It doesn’t make sense for general content marketing, for topics where every page needs original research, or as a shortcut when you just don’t want to write.

The stack that doesn’t get you de-indexed

Here’s the approach I use, broken into the parts that actually matter.

1. Keyword research first, data source second

Most people start with the data they already have, then try to force keywords around it. Better approach: start with keyword patterns, validate search volume and intent, then find or build the data that makes each page unique.

For a B2B SaaS client, the keyword pattern that worked was [Their Tool] + [Third-Party Tool] + integration. The searchers are people who use Tool X and want to know whether it connects to Tool Y. Each page answers that specific question with real setup instructions and data field mappings. Once the pages were indexed, they started showing up in GSC for the exact integration-pair queries we’d targeted - not thousands of visits, but consistent, qualified traffic from people actively looking for that specific connection.

Google Keyword Planner gives you volume estimates. Ahrefs or Semrush gives you competition context. You want patterns with volume where current results are weak (listicles, forum threads, or thin affiliate pages). That is where a well-structured programmatic page can rank quickly.

2. Data sources that produce genuinely unique pages

The data source is what separates content that ranks from content that gets filtered. It needs to produce page-level differences that a user can actually see and use.

Good data sources:

- Public APIs - product specs, pricing, feature sets (pull and store, don’t call at render time)

- Structured databases - your own data, third-party datasets, curated CSVs

- User-generated content - reviews, ratings, feature requests (if you have access)

- Scraped and structured data - legal and relevant to your domain (Python + BeautifulSoup gets you most of the way there for simpler targets)
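One way to read "pull and store, don’t call at render time" is sketched below: fetch each record once, persist it locally, and have the page renderer read only from the local store. `fetch_integration` is a stand-in for whatever API client you actually use, and the table layout is an assumption for illustration.

```python
import json
import sqlite3


def fetch_integration(slug):
    """Stand-in for a real API call; returns placeholder data for illustration."""
    return {"slug": slug, "fields": ["email", "name"], "sync": "one-way"}


def store_records(conn, slugs):
    """Fetch each record once and persist it so rendering never hits the API."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS integrations (slug TEXT PRIMARY KEY, payload TEXT)"
    )
    for slug in slugs:
        payload = json.dumps(fetch_integration(slug))
        conn.execute(
            "INSERT OR REPLACE INTO integrations (slug, payload) VALUES (?, ?)",
            (slug, payload),
        )
    conn.commit()


def load_record(conn, slug):
    """Read a stored record at render time; returns None if it was never pulled."""
    row = conn.execute(
        "SELECT payload FROM integrations WHERE slug = ?", (slug,)
    ).fetchone()
    return json.loads(row[0]) if row else None


conn = sqlite3.connect(":memory:")  # use a file path in a real pipeline
store_records(conn, ["acme-crm", "beta-mail"])
record = load_record(conn, "acme-crm")
```

Storing the raw payload also gives the QA step a fixed snapshot to check generated copy against.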

Each page needs at least one data point that no other page shares. If the only difference is the city name or the product name, that’s a thin content problem waiting to happen.
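A minimal sketch of that rule as a pre-generation check, assuming each page is backed by a flat dictionary of data. The record shape, the `name` key, and the sample values are hypothetical.

```python
def pages_without_unique_data(records, ignore=("name",)):
    """Return names of records whose every data value also appears on another page.

    `ignore` lists keys (such as the swapped-in name) that do not count as real data.
    """
    # Count how many records carry each (key, value) pair.
    counts = {}
    for rec in records:
        for key, value in rec.items():
            if key in ignore:
                continue
            pair = (key, str(value))
            counts[pair] = counts.get(pair, 0) + 1

    thin = []
    for rec in records:
        has_unique = any(
            counts[(k, str(v))] == 1 for k, v in rec.items() if k not in ignore
        )
        if not has_unique:
            thin.append(rec["name"])
    return thin


# Placeholder records for illustration only.
records = [
    {"name": "Alpha", "avg_rent": 1200, "coworking_spaces": 45},
    {"name": "Beta", "avg_rent": 950, "coworking_spaces": 20},
    {"name": "Gamma", "avg_rent": 950, "coworking_spaces": 20},
]
thin = pages_without_unique_data(records)
print(thin)
```

Here the second and third records differ only by name, so both are flagged before any copy is generated for them.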

3. Template design: structure + specificity

The template does two things: it gives the page consistent structure that search engines can parse, and it gives the data room to show up as specific, useful content.

A template that works has:

- A clear <h1> that includes the primary keyword naturally

- A lead section that answers the most common version of the query directly

- Data-driven sections that are different on every page (specifications, comparisons, setup steps)

- A consistent CTA that connects the content to an action

A template that fails: one that’s so rigid that the output looks identical across pages, just with a different noun swapped in.

For the integration pages example: the page structure was the same (intro, setup steps, data fields, use cases, CTA), but the setup steps, data field list, and use cases were all pulled from the API and were genuinely different for every integration pair.
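To show the split between fixed structure and variable data, here is a small sketch using Python’s standard `string.Template`. The section order follows the integration example above; the markup, the `/signup` link, and the record keys are assumptions, not the client’s actual template.

```python
from html import escape
from string import Template

# Fixed structure: the same sections on every page.
PAGE = Template(
    "<h1>Connect $tool_a with $tool_b</h1>\n"
    "<p>$intro</p>\n"
    "<h2>Setup steps</h2>\n<ol>\n$steps\n</ol>\n"
    "<h2>Data fields</h2>\n<ul>\n$fields\n</ul>\n"
    '<p><a href="/signup">Try the integration</a></p>\n'
)


def as_list_items(values):
    """Turn a list of strings into escaped <li> elements."""
    return "\n".join(f"<li>{escape(v)}</li>" for v in values)


def render_page(record):
    """Fill the shared template with one record's page-specific data."""
    return PAGE.substitute(
        tool_a=escape(record["tool_a"]),
        tool_b=escape(record["tool_b"]),
        intro=escape(record["intro"]),
        steps=as_list_items(record["setup_steps"]),
        fields=as_list_items(record["fields"]),
    )


# Placeholder record for illustration only.
page_html = render_page({
    "tool_a": "Acme CRM",
    "tool_b": "Beta Mail",
    "intro": "Send new Acme CRM contacts to Beta Mail lists.",
    "setup_steps": ["Create an API key", "Choose the fields to sync"],
    "fields": ["email", "first_name"],
})
```

`Template.substitute` raises `KeyError` when a placeholder has no value, which is a useful failure mode here: a page with a missing section never gets rendered silently.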

4. AI-assisted content generation with a QA layer

This is where AI helps most, and where it also goes wrong most often.

What Claude does well here:

- Expanding structured data into readable prose - turning a field mapping table into a paragraph explaining what you can actually do with the connection

- Generating varied phrasing for sections that appear on every page but need to feel different

- Writing the intro and conclusion from a prompt that includes the specific data points for that page

What it does badly:

- Generating content when the prompt is too vague - it defaults to generic

- Producing output that’s technically unique but semantically identical across pages

- Catching its own repetition when generating at scale

The fix is a QA layer before any page goes live:

- Similarity check - compare generated text across a sample of pages. If sections are too similar, tighten the prompt or add more data to differentiate.

- Fact check against source data - AI will occasionally hallucinate specifics. Spot-check that what’s on the page matches what’s in the database.

- Human review on the first 10–20 pages - before scaling, read the output. Fix what’s wrong at the template level, not one page at a time.
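The similarity check can start as something very simple. The sketch below compares every pair of generated sections with the standard library’s `difflib.SequenceMatcher` and flags pairs above a threshold. The 0.8 threshold and the sample texts are assumptions, and this only catches surface-level overlap; text that is reworded but says the same thing would need an embedding-based comparison or a human read.

```python
from difflib import SequenceMatcher
from itertools import combinations


def too_similar(sections, threshold=0.8):
    """Return (id_a, id_b, ratio) for every pair of texts at or above the threshold."""
    flagged = []
    for (id_a, text_a), (id_b, text_b) in combinations(sections.items(), 2):
        ratio = SequenceMatcher(None, text_a.lower(), text_b.lower()).ratio()
        if ratio >= threshold:
            flagged.append((id_a, id_b, round(ratio, 2)))
    return flagged


# Placeholder intros: the first two differ only by a swapped tool name.
intros = {
    "acme-beta": "Sync contacts from Acme CRM into Beta Mail to keep your lists current.",
    "acme-gamma": "Sync contacts from Acme CRM into Gamma Desk to keep your lists current.",
    "acme-delta": "Route new support tickets to the on-call channel with priority and SLA fields attached.",
}
flagged = too_similar(intros)
for id_a, id_b, ratio in flagged:
    print(f"{id_a} vs {id_b}: {ratio}")
```

Pairwise comparison grows quadratically, so for large batches it is more practical to run it on a random sample, as the list above suggests.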

Here’s a simplified version of the Claude prompt pattern that works for integration pages:

You are writing a landing page section for a software integration directory.

Integration: {tool_a} + {tool_b}

Direction: {tool_a} → {tool_b}

Data fields available: {field_list}

Common use case: {use_case_from_data}

Write 2-3 sentences explaining what this integration does and what a user can achieve with it.

Be specific to these tools. Do not use generic phrases like "streamline your workflow."

The specificity in the prompt is what drives specificity in the output. Generic prompt in, generic content out.
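Filling that prompt from a data record might look like the sketch below. It only builds the prompt string and refuses to build one when a field is missing; sending it to a model is left out, and the record shape is an assumption.

```python
PROMPT = """You are writing a landing page section for a software integration directory.

Integration: {tool_a} + {tool_b}
Direction: {tool_a} → {tool_b}
Data fields available: {field_list}
Common use case: {use_case_from_data}

Write 2-3 sentences explaining what this integration does and what a user can achieve with it.
Be specific to these tools. Do not use generic phrases like "streamline your workflow."
"""

REQUIRED = ("tool_a", "tool_b", "field_list", "use_case_from_data")


def build_prompt(record):
    """Fill the prompt from one record; fail loudly instead of sending a vague prompt."""
    missing = [key for key in REQUIRED if not record.get(key)]
    if missing:
        raise ValueError(f"record is missing data for: {', '.join(missing)}")
    values = dict(record, field_list=", ".join(record["field_list"]))
    return PROMPT.format(**values)


# Placeholder record for illustration only.
prompt = build_prompt({
    "tool_a": "Acme CRM",
    "tool_b": "Beta Mail",
    "field_list": ["email", "first_name", "lifecycle_stage"],
    "use_case_from_data": "add new CRM contacts to a welcome sequence",
})
print(prompt)
```

Raising on missing data is the point of the design: a record with gaps is exactly the case where the model falls back to generic copy.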

5. Launch, sitemap, and monitoring

Once the pages are generated:

- Submit a sitemap containing only the programmatic pages - don’t mix with your editorial content sitemap if you can help it, so you can monitor indexation separately

- Build internal links from your main site to the category or index pages, and between related programmatic pages (e.g. all pages featuring Tool A link to each other)

- Set up tracking at the sitemap level - GSC for indexation status and query data, Clicky or GA for traffic
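A dedicated sitemap for the programmatic pages can be generated with the standard library. This is a minimal sketch that emits only `<loc>` entries; the example.com URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML containing one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs for illustration only.
sitemap_xml = build_sitemap([
    "https://example.com/integrations/acme-crm",
    "https://example.com/integrations/beta-mail",
])
print(sitemap_xml)
```

Writing this output to its own file, separate from the editorial sitemap, is what makes per-sitemap indexation numbers meaningful.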

The first 2–4 weeks tell you a lot:

- If Google is crawling but not indexing, the content quality is probably flagged - review and improve the template

- If it’s indexing but not ranking, the keyword research may have overestimated demand or underestimated competition

- If it’s indexing and ranking, watch for the pages that outperform expectations - they often reveal a better keyword pattern to expand on
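The three cases above can be written down as a small triage function over exported page data. The field names are hypothetical, the "not crawled yet" branch is an addition for completeness, and treating an average position of 10 or better as "ranking" is an assumption.

```python
def diagnose(page):
    """Map one page's crawl, index, and ranking status to a next step."""
    if not page["crawled"]:
        return "not crawled yet: check the sitemap and internal links"
    if not page["indexed"]:
        return "crawled but not indexed: review and improve the template"
    position = page["avg_position"]
    if position is None or position > 10:
        return "indexed but not ranking: revisit keyword demand and competition"
    return "indexed and ranking: look for a pattern worth expanding"


# Placeholder rows for illustration only.
pages = [
    {"url": "/integrations/acme-crm", "crawled": True, "indexed": False, "avg_position": None},
    {"url": "/integrations/beta-mail", "crawled": True, "indexed": True, "avg_position": 24.0},
    {"url": "/integrations/gamma-desk", "crawled": True, "indexed": True, "avg_position": 4.2},
]
for page in pages:
    print(page["url"], "->", diagnose(page))
```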

Case study: B2B SaaS integration directory

To make this concrete, here’s how the full system looked for a real project.

The situation: A B2B SaaS client had a product that connected to 40+ third-party tools. Their competitors had integration directories. They had a static “integrations” page with a list of logos. Every time a potential customer searched “[Their Tool] + [Third-Party Tool] + integration”, they found someone else’s content.

The keyword pattern: "[Their product] + [third-party tool] + integration" and "connect [third-party tool] + [their product]". Volume varied by integration pair - some queries had 200 searches/month, some had 20 - but collectively, covering all 40+ integrations meant targeting a long-tail cluster with real aggregate volume.

The data source: The product had an internal API with structured data for each integration: supported data fields, sync direction, setup requirements, common use cases. This was exactly the kind of data that makes each page genuinely different.
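The shape of one record from such an API could be modeled roughly as below. The four data attributes mirror the fields listed above; the class name, attribute names, and the `is_publishable` rule are assumptions for illustration, not the client’s actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class IntegrationRecord:
    """One integration's structured data, as a page generator might receive it."""

    tool_name: str
    sync_direction: str  # e.g. "one-way" or "two-way"
    supported_fields: tuple[str, ...]
    setup_requirements: tuple[str, ...]
    use_cases: tuple[str, ...]

    def is_publishable(self) -> bool:
        """Assumed rule: every data-driven section needs at least one real entry."""
        return bool(
            self.tool_name
            and self.supported_fields
            and self.setup_requirements
            and self.use_cases
        )


# Placeholder records for illustration only.
complete = IntegrationRecord(
    tool_name="Beta Mail",
    sync_direction="one-way",
    supported_fields=("email", "first_name"),
    setup_requirements=("API key",),
    use_cases=("sync contacts",),
)
sparse = IntegrationRecord("Gamma Desk", "two-way", (), (), ())
print(complete.is_publishable(), sparse.is_publishable())
```

Filtering out sparse records before generation keeps pages with nothing specific to say from being published at all.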

The Claude workflow:

The generation pipeline used Claude for three specific tasks:

- Intro paragraph per page - given the tool name, sync direction, and top three use cases from the API, Claude wrote a 2–3 sentence intro that described what the integration does and who it’s for. Prompt required specificity: tool name, actual use case, no generic phrases.

- Use case expansion - the API returned raw use case labels (e.g., "sync contacts", "trigger automations"). Claude expanded each label into a sentence describing the business context: not just what syncs, but why a user would set it up and what they’d do with the result.

- FAQ section - Claude generated 3–4 questions for each page based on the integration-specific data. Questions like “Does [Their Tool] support two-way sync with [Third-Party]?” - answered using the actual field data, not invented.

The prompt template was the same across all pages. The data passed into the prompt was different for every page. That’s the difference between thin content and useful content.

QA process:

Before publishing, each batch of 20 pages went through:

- Similarity check: comparing the intro paragraphs across pages to ensure they weren’t converging on the same phrasing

- Data accuracy spot-check: verifying that 5 randomly sampled pages matched the actual API documentation

- Manual read of the first 10 pages to catch any prompt output that felt generic
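A sketch of how the batching and spot-check sampling could be wired up, using the batch size of 20 and sample size of 5 from the process above. The page and source dictionaries are hypothetical; `mismatches` simply reports keys where the published values disagree with the source record.

```python
import random


def batches(pages, size=20):
    """Split the page list into consecutive batches of at most `size` items."""
    return [pages[i:i + size] for i in range(0, len(pages), size)]


def sample_for_fact_check(batch, k=5, seed=None):
    """Pick up to k pages at random from a batch for manual or automated checking."""
    rng = random.Random(seed)
    return rng.sample(batch, min(k, len(batch)))


def mismatches(page_facts, source_facts):
    """Return the keys where the published page disagrees with the source record."""
    return [key for key, value in source_facts.items() if page_facts.get(key) != value]


# Placeholder data for illustration only.
all_pages = [f"page-{i}" for i in range(42)]
page_batches = batches(all_pages)
picked = sample_for_fact_check(page_batches[0], seed=7)

source = {"two_way_sync": False, "auth_method": "OAuth 2.0"}
published = {"two_way_sync": True, "auth_method": "OAuth 2.0"}
print(mismatches(published, source))
```

In this made-up example the page claims two-way sync that the source does not support, which is the kind of hallucinated specific the spot-check exists to catch.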

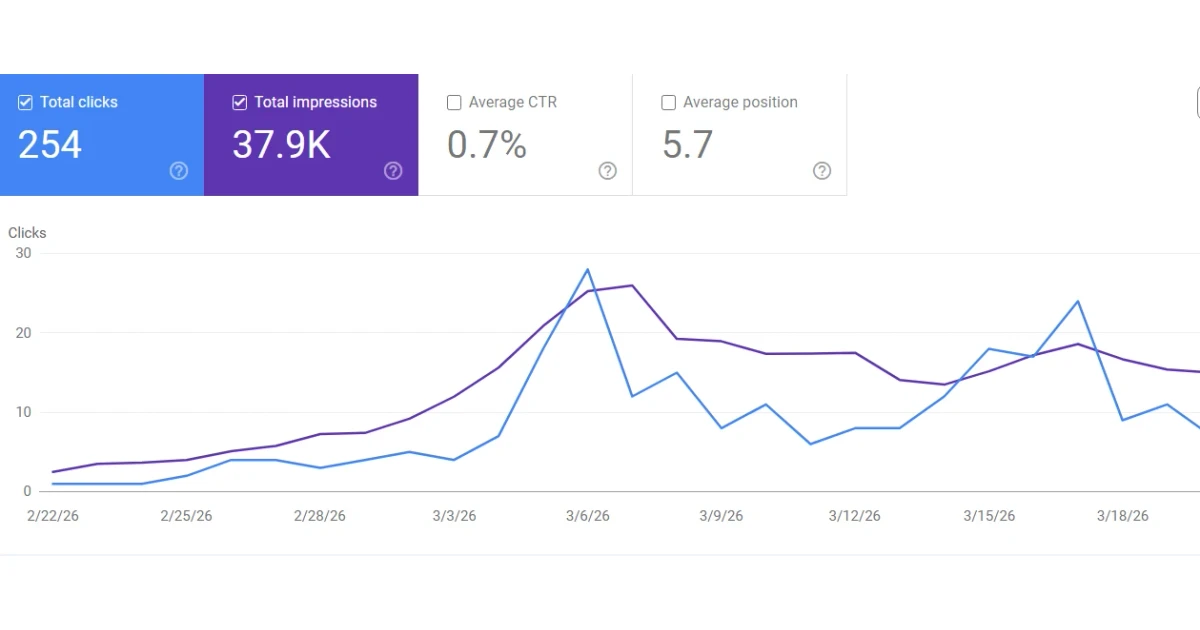

Results after 90 days:

- 38 of 42 integration pages indexed by Google

- 22 pages ranking on page 1 for their primary integration-pair query

- 680 organic clicks/month from the integration directory (from near-zero)

- 4 demo requests attributed to integration page traffic via UTM tags
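For context, the rates implied by those counts can be computed directly from the figures above (no additional data is assumed):

```python
pages_total = 42
pages_indexed = 38
pages_on_page_one = 22

index_rate = pages_indexed / pages_total
page_one_share_of_all = pages_on_page_one / pages_total
page_one_share_of_indexed = pages_on_page_one / pages_indexed

print(f"Indexed: {index_rate:.1%}")                                # Indexed: 90.5%
print(f"Page 1 (of all pages): {page_one_share_of_all:.1%}")       # 52.4%
print(f"Page 1 (of indexed pages): {page_one_share_of_indexed:.1%}")  # 57.9%
```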

The highest-traffic pages weren’t the integrations with the largest individual search volume - they were the pages where the competition was weakest: niche integration pairs where the only existing results were thin forum threads or outdated help articles.

The Claude prompt system in detail

For projects where I’m building this end-to-end, here’s the prompt architecture that produces reliable, unique output at scale.

Principle: every prompt includes enough specific data that Claude cannot fall back to generic phrasing. If the prompt could produce the same output regardless of which integration (or city, or product) you’re targeting, the prompt needs more data.

Prompt layer 1: intro section

Write an intro paragraph (2–3 sentences) for a landing page about the {tool_a} + {tool_b} integration.

Facts to use:

- Sync direction: {sync_direction}

- Primary use case: {primary_use_case}

- Data fields synced: {top_3_fields}

- Setup type: {setup_type} (e.g., native, Zapier, API)

Requirements:

- Lead with what a user can accomplish, not what the integration is

- Name both tools by name in the first sentence

- Do not use the phrases "streamline", "seamlessly", or "powerful"

- Under 80 words

Prompt layer 2: use case expansion

Expand this raw use case label into one sentence for a technical audience:

Integration: {tool_a} → {tool_b}

Label: {use_case_label}

Direction: data flows from {tool_a} to {tool_b}

Write the sentence as: [Who] [does what] [with what result]. Be specific to these tools.

Prompt layer 3: FAQ generation

Generate 3 frequently asked questions for the {tool_a} + {tool_b} integration page.

Available facts:

- Supports two-way sync: {true/false}

- Real-time or batch: {sync_type}

- Auth method: {auth_method}

- Known limitations: {limitations}

Format: Q: [question] / A: [answer, 1–2 sentences, factual, based only on the facts provided]

Do not invent capabilities not listed above.

The last instruction matters. Claude will hallucinate integration capabilities if you don’t explicitly restrict it to the available data. For factual content, always end your prompt with a constraint on what it can and cannot claim.
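Prompt constraints are only requests, so it can help to check the output against them before publishing. The sketch below checks a generated intro against the layer 1 requirements (banned words, under 80 words, both tools named in the first sentence). The sentence splitting is deliberately naive and will misfire on tool names that contain a period; the sample texts are placeholders.

```python
import re

BANNED = ("streamline", "seamlessly", "powerful")
MAX_WORDS = 80  # layer 1 asks for "under 80 words"


def intro_problems(text, tool_a, tool_b):
    """Return a list of ways the intro violates the layer 1 requirements."""
    problems = []
    lowered = text.lower()
    for phrase in BANNED:
        if phrase in lowered:
            problems.append(f"banned phrase: {phrase}")
    if len(text.split()) >= MAX_WORDS:
        problems.append("not under 80 words")
    # Naive split: the first sentence ends at the first ., ! or ? followed by whitespace.
    first_sentence = re.split(r"(?<=[.!?])\s+", text.strip(), maxsplit=1)[0].lower()
    for tool in (tool_a, tool_b):
        if tool.lower() not in first_sentence:
            problems.append(f"first sentence does not name {tool}")
    return problems


good = "Push new Acme CRM contacts into Beta Mail as they are created. Fields sync one way."
bad = "Seamlessly connect your tools. Acme CRM works with Beta Mail."
print(intro_problems(good, "Acme CRM", "Beta Mail"))
print(intro_problems(bad, "Acme CRM", "Beta Mail"))
```

A check like this does not verify facts; it only confirms the output respected the mechanical constraints, so it complements the spot-check against source data rather than replacing it.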

What it won’t do

Worth being direct about the limits:

It won’t replace editorial content. Programmatic pages answer specific, structured questions. They don’t build authority on complex topics, don’t earn backlinks the way a good analysis piece does, and don’t give people a reason to share or return.

It won’t work without traffic potential. If you’re in a niche with no search volume for the pattern you’re targeting, generating 500 pages produces 500 pages with no visitors.

It’s not fast to set up. The keyword research, data architecture, template design, generation pipeline, and QA process together take 2–4 weeks to do properly. It’s a systems project, not a content sprint.

It requires maintenance. Data sources go stale. New tools enter the market. Keyword patterns shift. Pages that ranked in year one need updating in year two.

Programmatic SEO with AI FAQ

Is programmatic SEO still worth it in 2026?

Yes, if each page has unique, useful data and a clear search intent match. No, if the plan is to publish thousands of near-duplicate pages.

How many pages should you launch first?

Start with 50–100 high-confidence pages. This is enough to validate crawling, indexing, and rankings before committing to full-scale generation.

Can AI alone run a programmatic SEO strategy?

No. AI can help draft and vary content, but keyword research, data quality, template design, and QA are what determine whether pages rank.

How do I know if my site is a good fit?

The clearest signal: there’s a keyword pattern you could describe as [Variable A] + [Variable B] where each combination has real search volume and you have or can get data to make each page genuinely different. If that’s true, it’s probably worth exploring. If you’re trying to force a pattern onto content that doesn’t have it, the results are usually disappointing.

How long before programmatic pages rank?

It depends on domain authority, competition, and content quality. With a solid data source and well-structured pages, Google typically crawls and indexes within a few weeks. Rankings on low-competition long-tail queries can follow within 1–3 months. Higher-competition patterns take longer, and some never crack page one - which is why keyword research and realistic expectations matter upfront.

Can you build this end-to-end, or do I need a team?

I handle the full system: keyword research, data pipeline, template design, AI-assisted generation, and QA. You’ll need to provide access to your product data or API (or we figure out the data source together). No additional team required on your end.

The actual output

When it works, it works at a scale editorial content can’t match.

A SaaS integration directory that starts with 800 pages targeting integration-pair searches will eventually cover most of the queries in its space - including long-tail combinations that would never individually justify a hand-written article. Individually, each page gets a handful of visits per month. Collectively, it becomes a significant organic channel.

The businesses that have built this well - Zapier, G2, Nomad List - treat it as infrastructure, not a campaign. You build it once, improve it over time, and it compounds.

Building something where programmatic SEO could be part of the strategy?

I design and build these systems for SaaS products and content businesses - keyword research, data pipeline, template system, and QA process included.

If you’re not sure whether it’s the right fit for your situation, send me a message and we can talk through the keyword pattern and data source first. No pitch - just a direct answer on whether it makes sense.
